About Me

I am a PhD candidate in Data Science at the University of Virginia School of Data Science, where I am fortunate to work with Dr. Chirag Agarwal in the AIKYAM Lab. My research focuses on Trustworthy AI, with particular emphasis on Multilingual AI, AI Safety, and Multimodal AI.

Before starting my PhD, I earned an MS in Mathematics from the University of Tennessee at Chattanooga, where I worked with Dr. Lakmali Weerasena on facility location problems in mathematical optimization. I received my bachelor's degree in Mathematics with Economics (First Class Honors) from the University of Cape Coast in Ghana.

Current Research

I am currently studying chain-of-thought (CoT) monitorability under linguistic distribution shift: how we can oversee and interpret the reasoning traces of large language models across different linguistic and cultural settings.

My recent work includes:

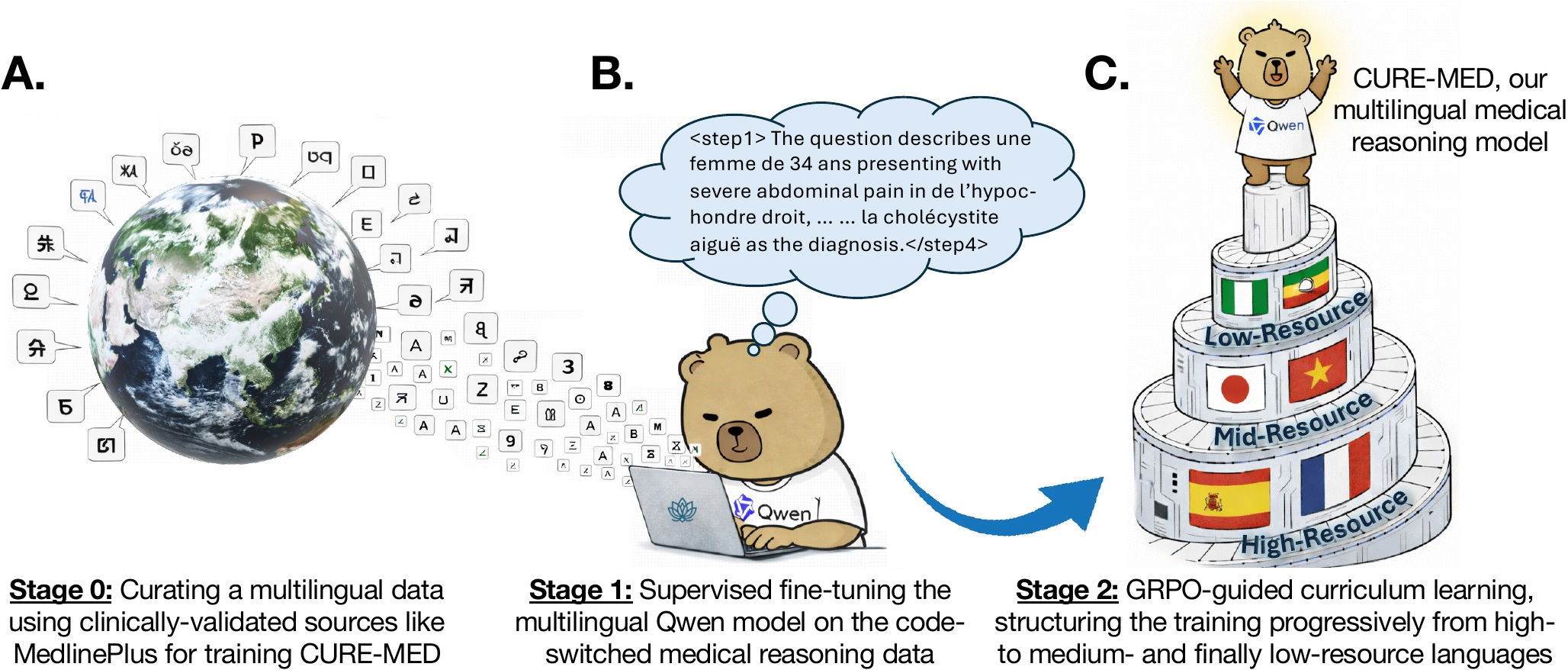

- Curriculum-informed reinforcement learning for multilingual medical reasoning (ACL 2026, Oral).

News

Recent updates and milestones.

Research

I spend most of my time thinking about how to make AI models more reliable and aligned with human values. I am broadly interested in Trustworthy AI, with a focus on building systems that are safe, robust, and worthy of user trust. I am motivated by a central question: how can we design AI models whose outputs are honest, harmless, helpful, and faithful to evidence across diverse users, languages, and contexts?

Recently, I have been especially interested in three directions:

- How can we build models, representations, and evaluation pipelines that generalize across languages and cultures, while producing responses that are consistent, accurate, and high-quality in the user's language?
- How can we detect and mitigate unsafe or misleading behaviors in advanced AI systems, including deception, manipulation, sycophancy, and scheming? I study these through interpretability-based methods, safety evaluations, and work on alignment and control.
- How do these challenges extend beyond text to models that reason across multiple modalities? I am interested in making multimodal systems robust to distribution shifts, out-of-domain inputs, and safety risks in complex settings.

Publications

Selected publications and ongoing work.

Education

Academic journey.

Teaching

Teaching Assistant for core Data Science and AI courses.

Skills

Technical proficiencies.